Don’t get sued by your own AI: a 5-step governance framework for partners

From data privacy to ‘hallucination liability’, here are some ideas on how to protect your PI insurance, writes Andrew Cooke.

It starts with a routine compliance review. A client's tax position, reviewed and signed off by a senior manager, turns out to be materially wrong. The ATO issues penalties. The client sues.

In discovery, something uncomfortable emerges: the analysis was drafted by an AI tool that no partner had approved, no policy governed, and no one had verified beyond a cursory glance. The tool had "hallucinated" a case reference that didn't exist. The manager had trusted it. The firm had signed off on it.

This isn't hypothetical. It's the scenario keeping risk partners awake in 2026.

"The director's duty of care now extends to the algorithms they deploy." That's where regulatory thinking is heading. When AI touches client work, governance isn't optional. It's a professional obligation.

The risk hiding in plain sight

Most partners assume their firm has AI under control. There's probably a policy somewhere. IT approved a tool last year.

But here's what's actually happening: staff are using AI tools the firm doesn't know about, on client data the firm hasn't secured, to produce work the firm hasn't verified. This is Shadow AI – and it's not fringe behaviour, it's the norm.

Over 60 per cent of accounting staff have used generative AI on client work without explicit firm approval. Most assumed it was fine. Many didn't mention it. Some actively hid it.

The problem isn't that staff are using AI. The problem is that firms have created a vacuum where no clear rules exist – and into that vacuum, risk flows.

Myth: "We've got an IT policy that covers AI."

Reality: IT policies govern software procurement and data security – they don't address who's responsible when AI produces incorrect advice, what disclosure clients receive, or how you verify outputs before they become the firm's outputs. These aren't IT questions. They're governance questions. Most firms haven't answered them.

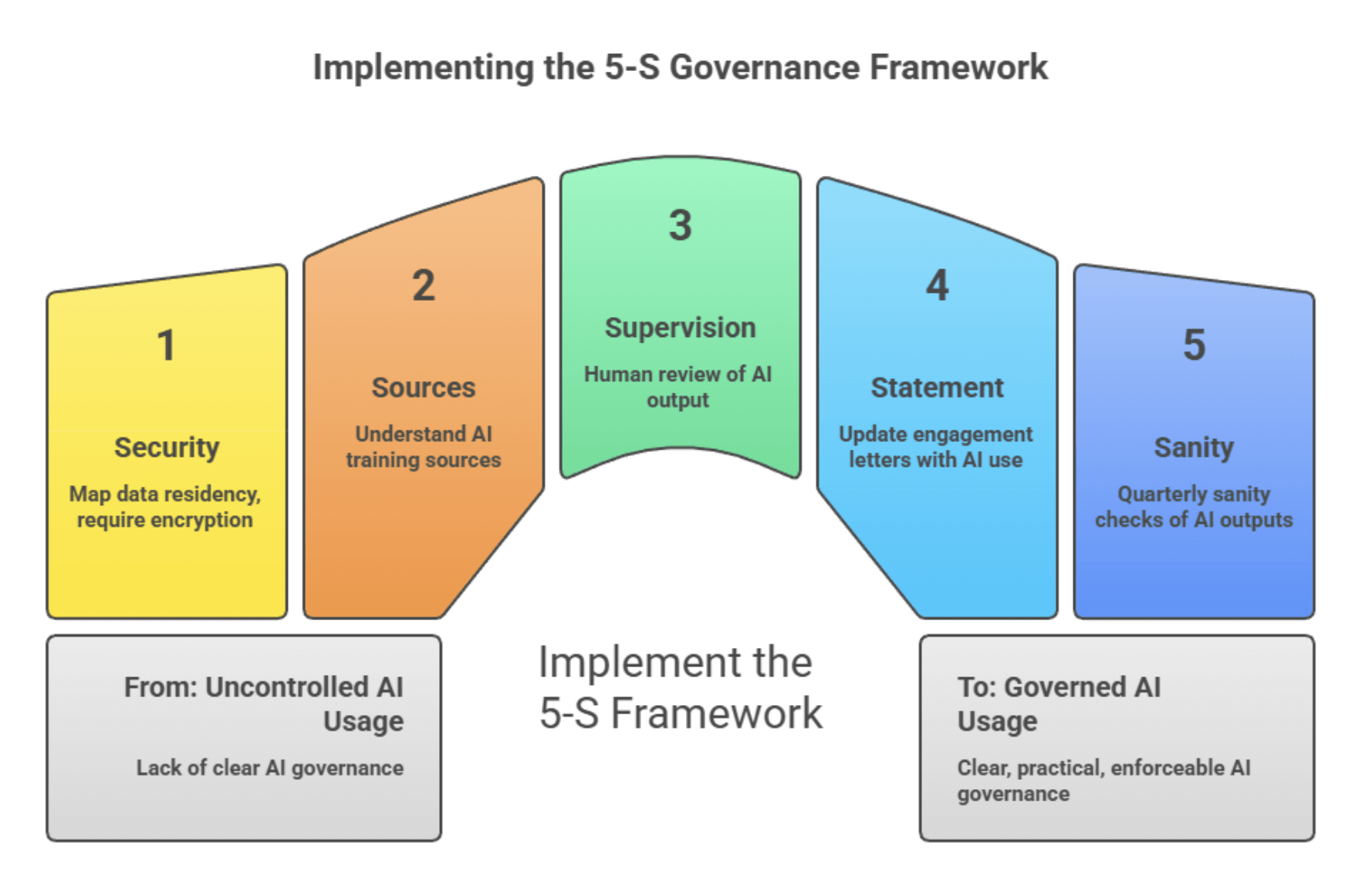

The 5-S governance framework

Governance doesn't need to be bureaucratic. It needs to be clear, practical, and enforceable. The firms getting this right are building around five interlocking controls.

1. Security: Where does the data live?

Every AI tool processes data somewhere. Client data fed into a US-hosted tool may breach Australian privacy principles. Data used to train a model may resurface unexpectedly.

The control: Map every AI tool to its data residency. Require encryption in transit and at rest. If a tool can't demonstrate compliant data handling, it doesn't get used on client work.
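One way to make this control concrete is a simple tool register that is checked before anything touches client work. The sketch below is illustrative only: the `AITool` fields, the `APPROVED_REGIONS` set and the tool names are assumptions, and which regions count as acceptable is a decision for the firm and its advisers, not something the code can settle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AITool:
    """One row in a hypothetical firm-wide AI tool register."""
    name: str
    data_region: str            # where the vendor says data is processed and stored
    encrypted_in_transit: bool
    encrypted_at_rest: bool


# Assumption: the firm has decided which hosting regions it accepts for client data.
APPROVED_REGIONS = {"AU"}


def cleared_for_client_work(tool: AITool) -> bool:
    """Return True only if residency and both encryption requirements are met."""
    return (
        tool.data_region in APPROVED_REGIONS
        and tool.encrypted_in_transit
        and tool.encrypted_at_rest
    )


# Hypothetical tools, for illustration only.
register = [
    AITool("LedgerDraft", "AU", True, True),
    AITool("TaxBot", "US", True, True),
    AITool("NoteTaker", "AU", True, False),
]

blocked = [t.name for t in register if not cleared_for_client_work(t)]
```

Here `blocked` lists the tools that should stay off client work until the vendor can demonstrate compliant handling.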

2. Sources: Where did the AI learn what it knows?

Generative AI predicts based on training data. A tool trained on US tax law will confidently produce advice that's wrong for Australian clients. A model trained on historical data may embed biases the firm would never consciously endorse.

The control: Before deploying any AI tool on client work, understand its training sources. Ask vendors directly. If they can't answer, that's your answer.

3. Supervision: Is there a human in the loop?

This is the non-negotiable. AI can draft and accelerate. It cannot exercise professional judgement or take responsibility.

The control: Every AI-generated output touching client work must be reviewed by a qualified professional before it leaves the firm. Not skimmed – reviewed. Human-led AI, not AI-led humans.
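For firms that track work through a practice-management system, the same rule can be expressed as a release gate. This is a minimal sketch under assumed names (`WorkProduct`, `release_to_client`); it records that a review happened, but it cannot judge whether the review was more than a skim.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkProduct:
    """A hypothetical record for a piece of client work."""
    title: str
    ai_assisted: bool
    reviewed_by: Optional[str] = None   # name of the qualified reviewer, if any


class ReviewRequired(Exception):
    """Raised when AI-assisted work is released without a recorded review."""


def release_to_client(item: WorkProduct) -> str:
    # AI-assisted work cannot leave the firm without a named reviewer.
    if item.ai_assisted and not item.reviewed_by:
        raise ReviewRequired(f"'{item.title}' needs professional review before release")
    return f"Released: {item.title}"
```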

4. Statement: What do clients know?

If a client discovered AI had been used in their engagement, would they be surprised? Regulators and insurers increasingly expect disclosure. Transparency builds trust. Concealment builds liability.

The control: Update your engagement letters with a clear statement about AI use, what controls are in place, and how professional responsibility is maintained.

5. Sanity: Are you testing what comes out?

AI models drift. They hallucinate. They produce outputs that look confident but are quietly wrong.

The control: Implement quarterly sanity checks. Feed tools known scenarios. Compare outputs against verified answers. Document results. If a tool fails, restrict its use until resolved.

If you can't demonstrate you're testing your AI, you can't demonstrate you're governing it.
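The quarterly check described above can be sketched as a small test harness. Everything here is an assumption made for illustration: the `Scenario` type, the `ask` callable standing in for whatever tool is being tested, and the 100 per cent pass threshold. Exact text matching is a deliberately crude comparison; in practice a qualified professional would judge whether an answer is materially right.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Scenario:
    """A known scenario with an answer the firm has already verified."""
    prompt: str
    verified_answer: str


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def run_sanity_check(
    tool_name: str,
    ask: Callable[[str], str],      # hypothetical wrapper around the AI tool
    scenarios: List[Scenario],
    pass_threshold: float = 1.0,    # assumption: any failure restricts the tool
) -> Dict:
    results = []
    for s in scenarios:
        actual = ask(s.prompt)
        results.append({
            "prompt": s.prompt,
            "expected": s.verified_answer,
            "actual": actual,
            "passed": normalise(actual) == normalise(s.verified_answer),
        })
    pass_rate = sum(r["passed"] for r in results) / len(results) if results else 0.0
    # The returned record is the documentation: keep it with the firm's risk files.
    return {
        "tool": tool_name,
        "date": date.today().isoformat(),
        "pass_rate": pass_rate,
        "restricted": pass_rate < pass_threshold,
        "results": results,
    }
```

The returned record covers the last two parts of the control: it documents what was tested, and its `restricted` flag signals when a tool should be pulled from client work until the failures are resolved.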

The conversation you’re not having

Most partnership meetings about AI ask the wrong question: "What tools should we buy?"

The better question: "What behaviours are we governing?"

The risk isn't in the technology. It's in the gap between what staff are doing and what partners know about. It's in the assumption that someone else has this covered. It's in the policy that exists on paper but not in practice.

AI is 20 per cent technology, 80 per cent psychology. The firms that govern AI well won't be the ones with the best tools. They'll be the ones with the clearest expectations and the discipline to enforce what they've agreed.

The line between innovation and negligence

Every firm wants to be seen as innovative. AI offers genuine opportunities to serve clients faster and more accurately. Those opportunities are real.

But innovation without governance is just reckless gambling with your professional reputation – and your PI insurance.

The question isn't whether to use AI. It's whether you can demonstrate, to a regulator, an insurer, or a courtroom, that you used it responsibly. The partners who thrive won't be the ones who moved fastest. They'll be the ones who moved deliberately – with governance firmly in place before they needed it.

Andrew Cooke is the principal consultant at Growth & Profit Solutions AI.
